I was honored to be a panelist on the television show White House Chronicle, which was broadcast last weekend and, in what I believe to be a somewhat unusual step, is being rebroadcast this weekend. The show is also published online at the show’s website, White House Chronicle.

I had a total blast doing that show! I was very honored to be invited, and I was immensely impressed with the outstanding professionalism and integrity of everyone associated with WHC. And the other panelists – Bob Franken and Lauren Ashburn – are top notch in their respective areas of expertise and beyond. I was definitely with a very illustrious group and it was a great privilege to be included.

One surprise to me was how incredibly funny everyone was! Llewellyn King is to be credited for a good-natured spirit that he instills in the show, a process that begins long before the show’s taping and made the entire experience very fun for me. It’s to his credit that the show is both relaxed and energetic at the same time, a rare combination that’s hard to foster, yet Llewellyn King makes it look easy. He’s well established as one of the most respected journalists in the business, but I’m not sure everyone is fully aware of what a great wit and fun individual he is.

Linda Gasparello exhibits great insight, and I know her to be quite innovative. Both she and Llewellyn are in tune with the quickly evolving state of technical changes in the industries of media and broadcasting, and the world at large. They recognize the current changes before other mainstream news outlets, and more than that, they recognize the potential implications in the future. Yet they are gracious enough to allow their guest panelists to share the spotlight. Each one of us had valuable contributions to share, all of which is to the credit of Mr. King and Ms. Gasparello.

Speaking of my fellow panelists, Bob Franken needs no introduction to news “consumers” like me. He’s one of the most accomplished journalists in the industry with impeccable credentials, not to mention a fantastic personality off camera as well. His insight into the state of technology goes far beyond what could be represented in this brief but power-packed broadcast. It was evident to me from the moment we all arrived at the studio that he’s on top of the major trends and, more importantly, understands their implications and how best to navigate the future. And if Bob and Llewellyn ever decide to do a stand-up comedy act, I’ll be the first in line to buy tickets.

Lauren Ashburn is one of those remarkable and rare people who is a visionary and also does something about it. She recognized the power of video on the web before most in the biz and oversaw USA Today’s work in that area while others were still figuring out what was going on. Many people talk the talk; Lauren is one of the rare few who have impacted the industry for the better in substantial ways. And if you happened to notice how much the camera loves her – and it does, everybody knows that – I can tell you this: she is even more of a babe in person. Yes, it is possible, I’m a witness.

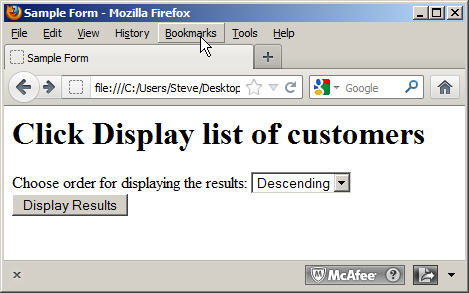

If you view the program, you’ll see that I had a camcorder running during the broadcast itself. I began filming several minutes before the show began, and caught some interesting discussion before and after what you see in the broadcast. I’ll be coordinating the publication of that footage with the good folks at WHC in the very near future. Keep an eye out for it, and in the meantime, if you wish to be notified when it’s up, just follow me on Twitter, or send me a request via our online form, and I’ll be sure to let you know.

I’ve received a number of requests for an electronic copy of the SQL scripts in my book, OCA Oracle Database SQL Expert Exam Guide (Exam 1Z0-047). I’ve emailed them to readers who have asked. And I’m probably overdue for publishing them here in this blog. So … now you can!
